By Paulo Freitas de Araujo Filho

This is the fifth and final blog post of our series “Empowering Intrusion Detection Systems with Machine Learning”, in which we discuss the use of machine learning in intrusion detection systems (IDSs). In the previous posts, we discussed the differences between signature and anomaly-based intrusion detection, and three unsupervised techniques for detecting intrusions: clustering, one-class novelty detection, and autoencoders. Now, we present a fourth technique, namely, generative adversarial networks (GANs) [1], and explain how it can be used to detect malicious activities in anomaly-based IDSs.

Generative Adversarial Networks

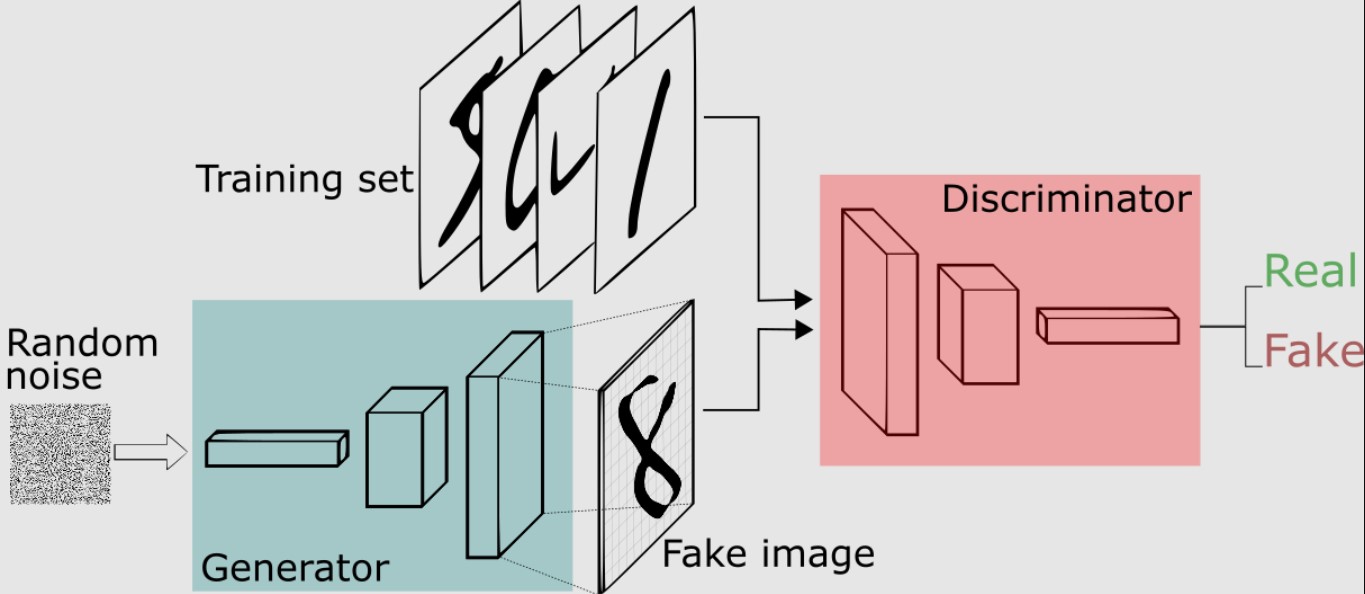

Rather than being a single neural network, a GAN is a framework that consists of two neural networks: a generator and a discriminator. These networks have different goals and compete with each other in an adversarial training process, so that when one of them gets better, the other must improve to keep up. They are like two chess players: when one starts winning, the other trains a bit harder to turn the score around. As a result, both players end up improving their performance and achieving better results [1]-[3].

That idea, and the concept of GANs, were originally developed in [1] for creating fake images. The authors of [1] designed a generator neural network that was capable of generating fake images that looked like real ones from random vectors. Their proposed discriminator, on the other hand, had the task of distinguishing between images that were real and those that were created by the generator.

Thus, given a set of cat images, for example, the generator gradually learns what those images look like and how to produce new cat images, whereas the discriminator learns how to distinguish between real and fake cat images. If the discriminator starts to get it right most of the time, the generator makes an extra effort by adjusting its weights a bit more so that it creates better cat images that the discriminator cannot recognize as fake. Then, it is the discriminator that makes an extra effort to be able to distinguish between real and fake cat images again. That process goes on until both the generator and the discriminator stabilize. Figure 1 shows the GAN training framework for a set of handwritten digit images.
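The alternating game described above is usually written as the minimax objective introduced in [1], where $G$ is the generator, $D$ is the discriminator, $x$ is a real sample, and $z$ is a random input vector:

$$\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}(x)}\left[\log D(x)\right] + \mathbb{E}_{z \sim p_z(z)}\left[\log\left(1 - D(G(z))\right)\right]$$

The discriminator raises $V$ by assigning high probabilities to real samples and low probabilities to generated ones, while the generator lowers $V$ by producing samples that the discriminator scores as real.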

Figure 2 shows handwritten digits created by a GAN generator after training in its first five columns and real handwritten digits in its last column.

Although generating handwritten digits or cat images may seem silly, GANs are an extremely sophisticated and powerful structure. The GAN generator implicitly models the system, i.e., it implicitly learns what patterns are present in a given set of data, which allows more powerful applications [5]. For instance, Figure 3 shows a zebra that was generated from a horse using GANs.

Figure 3. Zebra generated from a horse using GANs (obtained from [5])

Detecting Intrusions with GANs

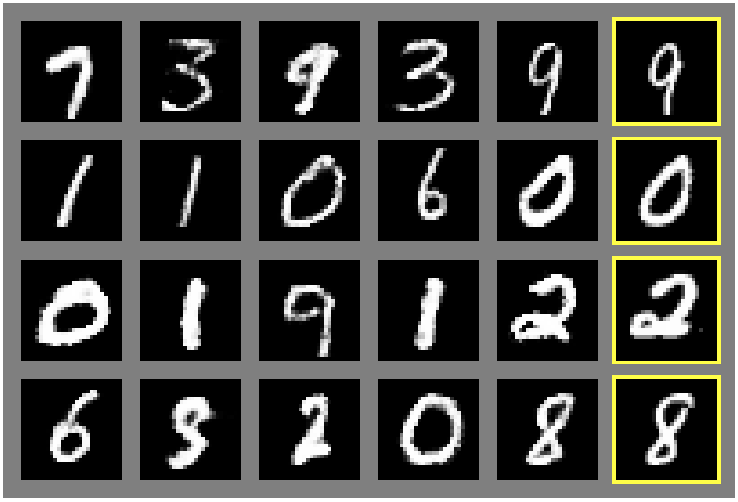

Ok, sounds interesting. But how can such a GAN be used to detect intrusions? Although GANs were first applied to images, they can be used to identify patterns in any type of data, such as network flows, Windows event logs, and even measurements from sensors in a factory [2], [3]. Thus, if a GAN is trained using only benign data from networks and systems, the GAN generator will learn how those data behave and how to produce similar data. The discriminator, on the other hand, will learn how to distinguish between benign data and fake data produced by the generator. Wait a minute! If the discriminator can tell real benign data apart from fake data that closely imitates it, it can also flag anomalies and malicious samples, even when they resemble benign data. Hence, the GAN discriminator can be used to detect intrusions. After training, the discriminator receives data samples and outputs a discrimination loss, a probability or score that indicates how likely it is that a sample represents an anomaly [2].

Moreover, recent works have shown that the GAN generator can also contribute to the detection of anomalies through the computation of a reconstruction loss, which is then combined with the discrimination loss [2], [3]. The reconstruction loss corresponds to the residual error between the data sample under evaluation and its reconstructed version obtained from the GAN generator. Therefore, a GAN-based IDS may decide whether a sample under evaluation is an anomaly by combining the discrimination and reconstruction losses into a single anomaly detection score, so that samples with large anomaly detection scores are considered potentially malicious [2], [3].
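The combination of the two losses can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the implementation from [2] or [3]: `toy_discriminate` and `toy_reconstruct` are hypothetical stand-ins for a trained discriminator and for the generator-based reconstruction, and the `weight` and `threshold` values are arbitrary choices made for the example.

```python
import numpy as np


def anomaly_scores(x, discriminate, reconstruct, weight=0.5):
    """Combine discrimination and reconstruction losses into one score.

    discriminate(x) -> probability in [0, 1] that each row of x is benign.
    reconstruct(x)  -> generator-based reconstruction of each row of x.
    weight          -> hypothetical trade-off between the two losses.
    """
    x = np.asarray(x, dtype=float)
    # Discrimination loss: high when the discriminator doubts the sample is benign.
    disc_loss = 1.0 - np.asarray(discriminate(x), dtype=float)
    # Reconstruction loss: mean absolute residual between sample and reconstruction.
    rec_loss = np.mean(np.abs(x - reconstruct(x)), axis=1)
    return weight * disc_loss + (1.0 - weight) * rec_loss


# Toy stand-ins for trained networks (assumptions for illustration only):
# benign data is assumed to lie close to the origin.
def toy_discriminate(x):
    return np.exp(-np.sum(x ** 2, axis=1))


def toy_reconstruct(x):
    # Can only reproduce values inside the assumed benign range [-1, 1].
    return np.clip(x, -1.0, 1.0)


benign = np.array([[0.1, -0.2], [0.0, 0.3]])
attack = np.array([[3.0, -2.5]])

threshold = 0.5  # hypothetical; in practice tuned on held-out benign data
scores = anomaly_scores(np.vstack([benign, attack]), toy_discriminate, toy_reconstruct)
flags = scores > threshold  # True marks a potentially malicious sample
```

In this toy setup, the two benign rows receive small scores because both losses are close to zero, while the attack row is penalized by both the discriminator and the reconstruction residual.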

The work in [2] adopted that approach and proposed FID-GAN, a GAN-based IDS for detecting cyber-attacks in a water treatment plant. It conducted experiments on three datasets: the SWaT [6] and WADI [7] datasets, which contain sensor measurements of a water treatment plant, and the NSL-KDD [8] dataset, which contains network traffic data. By combining the discrimination and reconstruction losses, FID-GAN achieved higher detection rates than when using those losses individually. Figure 4 shows the ROC curves (true positive rate versus false positive rate) of FID-GAN on the SWaT, WADI, and NSL-KDD datasets. The area under the curve (AUC) metric, depicted in Figure 4, summarizes how well the detector separates benign from malicious samples. Please refer to [2] for more information about FID-GAN.
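To make the AUC metric concrete, the sketch below computes it directly from anomaly scores and labels. It relies on a standard equivalence: the AUC equals the fraction of (malicious, benign) pairs in which the malicious sample receives the higher score, with ties counted as half. The function name `roc_auc` and the four example scores are made up for illustration and are not results from [2].

```python
def roc_auc(scores, labels):
    """Area under the ROC curve for anomaly scores.

    labels: 1 for malicious, 0 for benign. Computed as the fraction of
    (malicious, benign) pairs in which the malicious sample gets the
    higher score, counting ties as half a win.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one malicious and one benign sample")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Hypothetical anomaly scores: one malicious sample (0.35) is ranked
# below one benign sample (0.4), so the AUC falls short of 1.0.
example_scores = [0.1, 0.4, 0.35, 0.8]
example_labels = [0, 0, 1, 1]
auc = roc_auc(example_scores, example_labels)  # 3 of 4 pairs ordered correctly
```

An AUC of 1.0 means every malicious sample scores above every benign one, whereas 0.5 is what a detector that assigns scores at random would obtain on average.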

Deployment

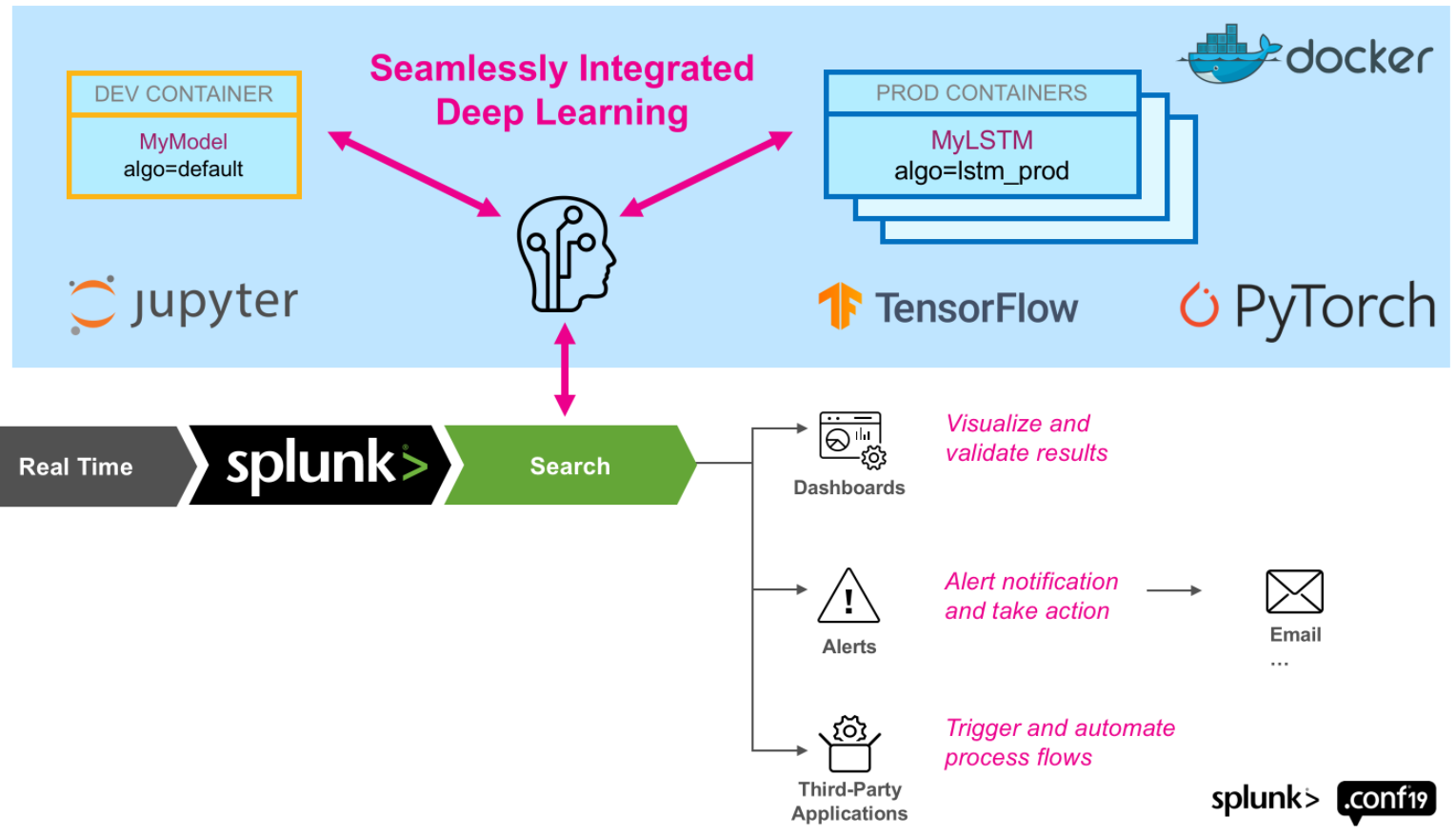

As presented in our previous blog post, which discussed IDSs based on autoencoders, Splunk offers a deep learning toolkit (DLTK) that allows us to implement and deploy deep learning algorithms. Hence, we can leverage it to deploy GAN-based IDSs, such as the one proposed in [2]. Figure 5 illustrates the integration between Splunk and a Docker environment with support for the most common deep learning frameworks, TensorFlow and PyTorch. Please refer to [9] and [10] for more information.

Challenges and Drawbacks

Like the other algorithms discussed in this series, GANs detect intrusions by relying only on benign data from networks and systems. Thus, they are a very useful technique when it is difficult or expensive to obtain labeled attack data, which is a very common situation. However, this also means that the training samples we provide must be free from attacks. Otherwise, the trained model could be misled into believing that malicious samples are benign.

Moreover, although GAN-based IDSs have shown a remarkable capability for identifying anomalies that were previously very difficult to find, thus outperforming many other IDSs, they are much more challenging to train. For that reason, several techniques are being proposed for improving GANs and making them easier to train [11], [12].

Finally, like any other machine learning-based classifier, GAN-based IDSs are vulnerable to adversarial attacks, which craft and introduce small, imperceptible perturbations that compromise the classifier’s accuracy. Therefore, they must be enhanced to become robust against such sophisticated threats. However, there is not yet an established solution against adversarial attacks, which remain an active research field [13].
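The effect of such a perturbation can be illustrated on a deliberately simple detector. The sketch below assumes a hypothetical linear classifier with made-up weights `w` and bias `b`, and shifts each feature by at most `epsilon` in the direction that lowers the detection score, in the spirit of a gradient-sign step. It is a toy example rather than an attack on a real IDS or a method taken from [13].

```python
import numpy as np

# Hypothetical linear detector: a sample is flagged when w.x + b > 0.
w = np.array([2.0, -1.0, 0.5])
b = -1.0


def is_flagged(x):
    return float(np.dot(w, x) + b) > 0.0


# A sample that the detector flags as malicious (score is about 0.6).
x_malicious = np.array([0.8, 0.2, 0.4])

# For a linear model the gradient of the score with respect to x is w,
# so stepping against sign(w) lowers the score by epsilon * sum(|w|).
epsilon = 0.2
x_adv = x_malicious - epsilon * np.sign(w)

flagged_before = is_flagged(x_malicious)
flagged_after = is_flagged(x_adv)
```

Here no feature changes by more than `epsilon`, yet the score drops by about 0.7 and crosses the decision boundary, so the perturbed sample is no longer flagged.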

With great power comes great responsibility

Like any other powerful tool, GANs may also be used for evil. Although they can produce extremely powerful IDSs, GANs may also be used to produce adversarial attacks. That is, they can be used to produce slightly modified patterns that trick machine learning-based IDSs into not recognizing malicious samples as malicious [13]. It goes even further: GANs may also be used for crafting perturbations that trick malware detectors into missing malware [13] and modulation classifiers into misidentifying the modulation scheme being used [14], and even for creating deep fakes and bypassing biometric systems [15].

Conclusion

In this post, we discussed how IDSs can leverage GANs to detect anomalies by combining a discrimination and a reconstruction loss. Moreover, we highlighted how GANs may also be used for evil! At Tempest, we are investigating and relying on such techniques to better protect your business! We hope you have enjoyed this series of blog posts! Stay tuned for our next posts and series!

References

[1] I. Goodfellow, J. Pouget-Abadie, M. Mirza, B. Xu, D. Warde-Farley, S. Ozair, A. Courville, and Y. Bengio, “Generative adversarial nets,” in Proc. of NIPS, 2014, pp. 2672–2680.

[2] P. Freitas de Araujo-Filho, G. Kaddoum, D. R. Campelo, A. Gondim Santos, D. Macêdo and C. Zanchettin, “Intrusion Detection for Cyber–Physical Systems Using Generative Adversarial Networks in Fog Environment,” in IEEE Internet of Things Journal, vol. 8, no. 8, pp. 6247-6256, Apr. 15, 2021, doi: 10.1109/JIOT.2020.3024800.

[3] D. Li, D. Chen, B. Jin, L. Shi, J. Goh, and S.-K. Ng, “MAD-GAN: Multivariate anomaly detection for time series data with generative adversarial networks,” in Proc. Springer Int. Conf. Artif. Neural Netw., 2019, pp. 703–716.

[4] Deep Learning Book. “Capítulo 54 – Introdução às Redes Adversárias Generativas (GANs – Generative Adversarial Networks)”. Accessed: Jul. 21, 2022. [Online]. Available: https://www.deeplearningbook.com.br/introducao-as-redes-adversarias-generativas-gans-generative-adversarial-networks/

[5] J. Hui. “GAN — What is Generative Adversarial Networks GAN?”. Accessed: Jul. 21, 2022. [Online]. Available: https://jonathan-hui.medium.com/gan-whats-generative-adversarial-networks-and-its-application-f39ed278ef09

[6] The Secure Water Treatment (SWaT), iTrust Singapore Univ. Technol. Design (SUTD), Singapore, 2015. [Online]. Available: https://itrust.sutd.edu.sg/itrust-labs-home/itrust-labs_swat/

[7] The Water Distribution (WADI), iTrust Singapore Univ. Technol. Design (SUTD), Singapore, 2016. [Online]. Available: https://itrust.sutd.edu.sg/itrust-labs-home/itrust-labs_wadi/

[8] NSL-KDD Data Set, Can. Inst. Cybersecurity, Fredericton, NB, Canada. Accessed: Apr. 10, 2020. [Online]. Available: https://www.unb.ca/cic/datasets/nsl.html

[9] D. Federschmidt, P. Salm, L. Utz, G. Ainslie-Malik, P. Drieger, A. Tellez, P. Brunel, R. Fujara, “Splunk App for Data Science and Deep Learning (DLTK)”, Accessed: Jun. 23, 2022. [Online]. Available: https://splunkbase.splunk.com/app/4607/#/details

[10] D. Lambrou, “Splunk with the Power of Deep Learning Analytics and GPU Acceleration”, Accessed: Jun. 23, 2022. [Online]. Available: https://www.splunk.com/en_us/blog/tips-and-tricks/splunk-with-the-power-of-deep-learning-analytics-and-gpu-acceleration.html

[11] M. Arjovsky, S. Chintala, and L. Bottou, “Wasserstein generative adversarial networks,” in Proc. Int. Conf. Mach. Learn., 2017, pp. 214–223.

[12] I. Gulrajani, F. Ahmed, M. Arjovsky, V. Dumoulin, and A. Courville, “Improved training of Wasserstein GANs,” 2017, arXiv:1704.00028.

[13] J. Liu, M. Nogueira, J. Fernandes and B. Kantarci, “Adversarial Machine Learning: A Multilayer Review of the State-of-the-Art and Challenges for Wireless and Mobile Systems,” in IEEE Communications Surveys & Tutorials, vol. 24, no. 1, pp. 123-159, Firstquarter 2022, doi: 10.1109/COMST.2021.3136132.

[14] P. Freitas de Araujo-Filho, G. Kaddoum, M. Naili, E. T. Fapi and Z. Zhu, “Multi-Objective GAN-Based Adversarial Attack Technique for Modulation Classifiers,” in IEEE Communications Letters, vol. 26, no. 7, pp. 1583-1587, July 2022, doi: 10.1109/LCOMM.2022.3167368.

[15] T. T. Nguyen, C. M. Nguyen, D. T. Nguyen, D. T. Nguyen, and S. Nahavandi, “Deep learning for deepfakes creation and detection: a survey,” arXiv preprint arXiv:1909.11573, 2019.

Other articles in this series

Empowering Intrusion Detection Systems with Machine Learning

Part 1 of 5: Signature vs. Anomaly-Based Intrusion Detection Systems

Part 2 of 5: Clustering-Based Unsupervised Intrusion Detection Systems

Part 3 of 5: One-Class Novelty Detection Intrusion Detection Systems

Part 4 of 5: Intrusion Detection using Autoencoders

Part 5 of 5: Intrusion Detection using Generative Adversarial Networks